The $650 Billion AI Spending Spree: Why the "Power Wall" Is the Next Global Bottleneck

By Cleaner Energy Solutions Staff

Published February 8, 2026

On February 6, 2026, the global technology sector signaled a historic shift that will be remembered as the start of the “physicality” era of computing. Nvidia shares rallied nearly 7% in a single day, erasing a week of software-led volatility. The catalyst? A breathtaking series of capital expenditure (capex) pledges from the “Big Four” of cloud computing—Amazon, Alphabet, Meta, and Microsoft—who collectively committed a staggering $650 billion to AI infrastructure for the 2026 calendar year.

This represents a nearly 70% increase from 2025’s already record-breaking spending. As Nvidia CEO Jensen Huang noted during a Friday CNBC appearance, we are in the midst of a “generational buildout” with at least seven to eight years of runway ahead. However, as the checks are signed and the chips are ordered, a daunting physical reality is emerging: $650 billion worth of silicon requires a massive amount of electricity—more than the current global grid can reliably provide.

The Capex Explosion: From Software to Heavy Industry

The sheer scale of the 2026 spending is unprecedented in the history of the private sector.

- Amazon leads the charge with a projected $200 billion investment, focusing on semiconductors and robotics to support its AWS dominance.

- Alphabet (Google) stunned analysts by forecasting up to $185 billion in spending, nearly $70 billion higher than previous estimates.

- Microsoft and Meta round out the pack with $145 billion and $135 billion, respectively.
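As a quick sanity check, the pledges listed above can be tallied against the headline number. This is a minimal sketch that uses only the figures quoted in this article. Alphabet's figure is an "up to" ceiling, so the sum is an upper bound, and the 2025 baseline is only implied by treating the "nearly 70%" increase as exactly 70%, which is an assumption rather than a reported figure.

```python
# Capex pledges for 2026 in billions of USD, as quoted in the article.
# Alphabet's figure is an "up to" ceiling, so the total is an upper bound.
pledges_bn = {
    "Amazon": 200,
    "Alphabet": 185,
    "Microsoft": 145,
    "Meta": 135,
}

total_bn = sum(pledges_bn.values())

# Headline figure cited in the article, and the baseline it implies if the
# "nearly 70%" year-over-year increase is treated as exactly 70% (assumption).
headline_bn = 650
implied_2025_bn = headline_bn / 1.70

print(f"Sum of quoted pledges: ${total_bn}B")
print(f"Implied 2025 spending: ${implied_2025_bn:.0f}B")
```

The quoted ceilings sum to $665 billion, slightly above the $650 billion headline, which is consistent with at least one of the pledges being an upper bound rather than a firm commitment.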

Analysts, including DA Davidson’s Gil Luria, noted that this capital is flowing almost directly into “physicality”: land, data center shells, cooling systems, and, most importantly, high-performance chips. But while the market is focused on the profit margins of chipmakers, engineers are focused on the “Power Wall.” Next-generation AI hardware, such as HBM8-powered GPU modules, is projected to consume up to 15,000 watts per module by the end of the decade. A single AI-optimized server rack can now draw as much power as a small neighborhood, and the massive data centers being planned today, some reaching 2 GW to 5 GW in capacity, require the energy output of multiple traditional power plants.
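To put those power figures in proportion, the sketch below estimates how many 15,000-watt modules a campus of a given capacity could run. The overhead multiplier (a power usage effectiveness, or PUE, of 1.2) is a hypothetical value chosen for illustration and is not taken from this article. The function name is likewise illustrative.

```python
# Projected per-module draw quoted in the article (watts).
MODULE_WATTS = 15_000

# Hypothetical overhead factor for cooling and power conversion (PUE).
# This value is an assumption for illustration, not a figure from the article.
ASSUMED_PUE = 1.2


def modules_supported(campus_gw: float, pue: float = ASSUMED_PUE) -> int:
    """Return how many modules a campus can power, rounded down."""
    campus_watts = campus_gw * 1e9
    watts_per_module_with_overhead = MODULE_WATTS * pue
    return int(campus_watts // watts_per_module_with_overhead)


for gw in (2, 5):
    print(f"{gw} GW campus: about {modules_supported(gw):,} modules")
```

Under these assumptions, a 2 GW campus supports on the order of 111,000 modules and a 5 GW campus on the order of 278,000. The point is the scale, not the precise count, which shifts with the overhead factor.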

The Speed-to-Power Metric

In 2026, “speed-to-market” has been replaced by a more critical metric: “speed-to-power.” While a tech giant can order $10 billion worth of GPUs in an afternoon, securing the electricity to run them is a multi-year ordeal. In the PJM regional grid alone, which covers 13 states, interconnection delays run from four to eight years. Regional utilities are struggling to upgrade transmission lines, and the 60 GW power shortfall projected for the next decade has turned the energy grid into the ultimate bottleneck for AI growth.

This scarcity is driving a radical change in strategy. Tech giants are moving away from passive reliance on the local utility and toward “Bring-Your-Own-Power” (BYOP). If you want to spend $200 billion on AI, you can no longer wait for the grid to catch up; you have to build the grid yourself.

Solving the “Energy Constrained” Crisis

Nvidia’s Huang recently remarked that his customers are no longer “software constrained”—they are “computer constrained.” In reality, they are energy constrained. To fuel the $650 billion AI buildout without crashing the public grid or sending residential electricity bills skyrocketing, the industry requires a new class of industrial energy.

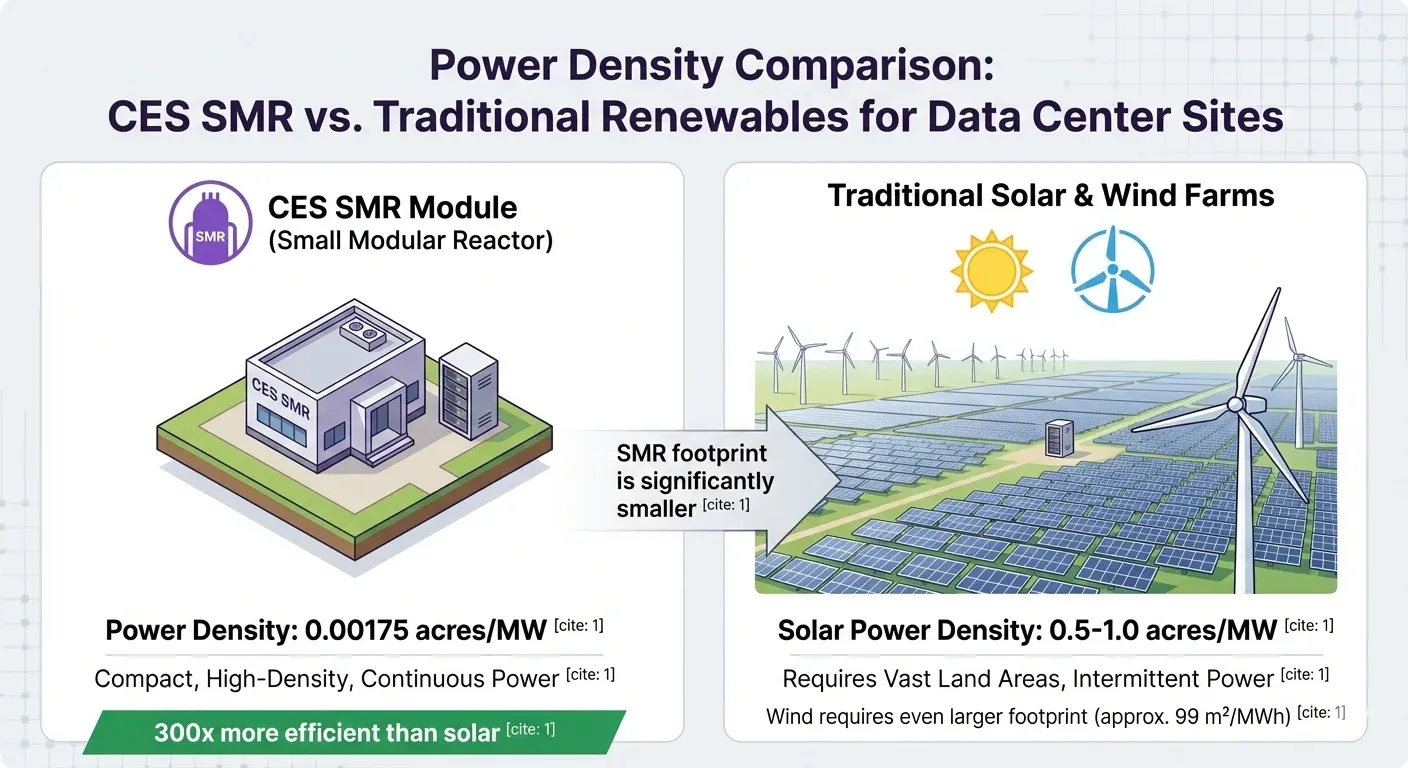

Wind and solar, while vital for overall sustainability, lack the 24/7 “baseload” reliability and the immense power density required for 2,000 MW AI campuses. This gap has created a massive opening for advanced nuclear technology. Under the current administration’s pro-nuclear policies and the streamlined regulations of the Nuclear Energy Innovation and Modernization Act, modular nuclear energy is moving from a distant hope to a functional reality.

The CES Solution: Powering the Generational Buildout

Amidst this historic reallocation of capital, infrastructure pioneers like Cleaner Energy Solutions (CES) are providing the critical energy layer needed to sustain the AI revolution. While the world’s leading chipmakers provide the “brains” for AI, CES delivers the “heart”: the reliable, zero-carbon power required to keep them running. By deploying advanced Small Modular Reactors (SMRs) housed within resilient, hurricane- and earthquake-proof ellipsoid domes, CES offers a scalable, plug-and-play energy ecosystem. Each 300 MW module is designed to sit directly alongside data center campuses, bypassing the aging national grid and delivering 100% clean power. This “energy server” model allows hyperscalers to scale to 600 MW or 900 MW by adding a second or third module, ensuring that the $650 billion in AI hardware has the fuel it needs to operate 24/7 without relying on fossil fuels.
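The modular sizing described above reduces to a ceiling division. The sketch below is illustrative only: it assumes each module delivers its full 300 MW to the campus and ignores redundancy, maintenance outages, and reserve margins, none of which are specified in this article. The function name is hypothetical.

```python
# Module size quoted in the article (megawatts).
MODULE_MW = 300


def modules_needed(campus_mw: int, module_mw: int = MODULE_MW) -> int:
    """Smallest whole number of modules whose combined output covers the load.

    Assumes every module delivers its full rated output (no redundancy
    or reserve margin), which is a simplification for illustration.
    """
    # Integer ceiling division: rounds up without floating-point error.
    return -(-campus_mw // module_mw)


# The 600 MW and 900 MW steps, plus the 2 GW and 5 GW campus sizes
# mentioned earlier in the article.
for load_mw in (600, 900, 2_000, 5_000):
    print(f"{load_mw:>5} MW campus: {modules_needed(load_mw)} modules")
```

On these assumptions, the 600 MW and 900 MW steps correspond to two and three modules, while a 2 GW campus would need seven and a 5 GW campus seventeen. A real deployment would likely add spare capacity on top of these minimums.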

A Multi-Decade Investment Cycle

As we look toward the remainder of 2026, the $650 billion pledged by Big Tech is only the beginning. The shift from low-growth software to heavy-growth infrastructure has transformed the energy sector from a utility backwater into the most important asset class of the century.

The winners of the AI race will not just be those with the fastest chips, but those who successfully bridge the gap between digital ambition and physical power. By integrating resilient, modular nuclear solutions, the industry can ensure that the “generational buildout” stays on track, powering a more intelligent future without draining the world’s resources.